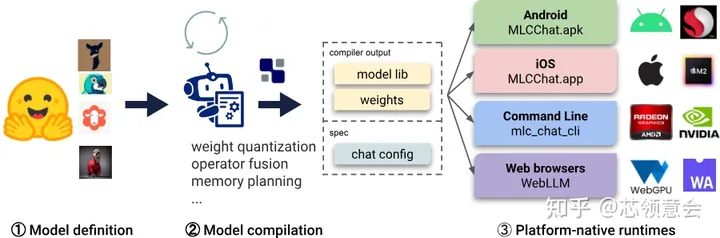

MLC LLM

submodules in MLC LLM

大模型(LLM)好性能通用部署方案,陈天奇(tvm发起者)团队开发.

项目链接

docs: https://llm.mlc.ai/docs/

github: https://github.com/mlc-ai/mlc-llm

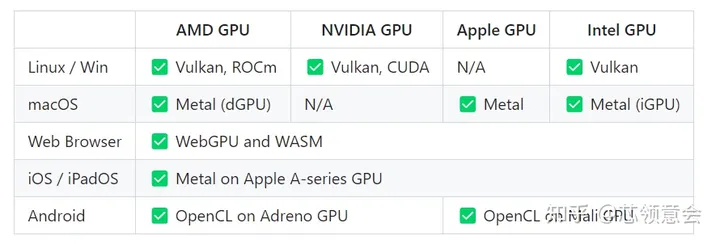

支持的平台和硬件

platforms & hardware

支持的模型

| Architecture | Prebuilt Model Variants |

| Llama | Llama-2, Code Llama, Vicuna, WizardLM, WizardMath, OpenOrca Platypus2, FlagAlpha Llama-2 Chinese, georgesung Llama-2 Uncensored |

| GPT-NeoX | RedPajama |

| GPT-J | |

| RWKV | RWKV-raven |

| MiniGPT | |

| GPTBigCode | WizardCoder |

| ChatGLM | |

| ChatGLM |

接口API 支持

Javascript API, Rest API, C++ API, Python API, Swift API for iOS app, Java API & Android App

量化(Quantization) 方法支持

4-bit, LUT-GEMM, GPTQ

ref: https://llm.mlc.ai/docs/compilation/configure_quantization.html

其他

最大的特点是可以快速部署大模型到iOS 和 Android 设备上, 浏览器上运行文生图模型(sd1.5/2.1)和大模型, 推理框架基于tvm-unity.

vLLM

快速简单易用的大模型推理框架和服务,来自加州大学伯克利分校

vLLm 运行大模型非常快主要使用以下方法实现的:

- 先进的服务吞吐量

- 通过PageAttention 对attention key & value 内存进行有效的管理

- 对于输入请求的连续批处理

- 高度优化的CUDA kernels

项目链接

docs: Welcome to vLLM!

github: https://github.com/vllm-project/vllm

支持的平台和硬件

NVIDIA CUDA, AMD ROCm

支持的模型

vLLM seamlessly supports many Hugging Face models, including the following architectures:

- Aquila & Aquila2 (BAAI/AquilaChat2-7B, BAAI/AquilaChat2-34B, BAAI/Aquila-7B, BAAI/AquilaChat-7B, etc.)

- Baichuan & Baichuan2 (baichuan-inc/Baichuan2-13B-Chat, baichuan-inc/Baichuan-7B, etc.)

- BLOOM (bigscience/bloom, bigscience/bloomz, etc.)

- ChatGLM (THUDM/chatglm2-6b, THUDM/chatglm3-6b, etc.)

- Falcon (tiiuae/falcon-7b, tiiuae/falcon-40b, tiiuae/falcon-rw-7b, etc.)

- GPT-2 (gpt2, gpt2-xl, etc.)

- GPT BigCode (bigcode/starcoder, bigcode/gpt_bigcode-santacoder, etc.)

- GPT-J (EleutherAI/gpt-j-6b, nomic-ai/gpt4all-j, etc.)

- GPT-NeoX (EleutherAI/gpt-neox-20b, databricks/dolly-v2-12b, stabilityai/stablelm-tuned-alpha-7b, etc.)

- InternLM (internlm/internlm-7b, internlm/internlm-chat-7b, etc.)

- LLaMA & LLaMA-2 (meta-llama/Llama-2-70b-hf, lmsys/vicuna-13b-v1.3, young-geng/koala, openlm-research/open_llama_13b, etc.)

- Mistral (mistralai/Mistral-7B-v0.1, mistralai/Mistral-7B-Instruct-v0.1, etc.)

- MPT (mosaicml/mpt-7b, mosaicml/mpt-30b, etc.)

- OPT (facebook/opt-66b, facebook/opt-iml-max-30b, etc.)

- Phi-1.5 (microsoft/phi-1_5, etc.)

- Qwen (Qwen/Qwen-7B, Qwen/Qwen-7B-Chat, etc.)

- Yi (01-ai/Yi-6B, 01-ai/Yi-34B, etc.)

接口API支持

OpenAI-compatible API server

分布式推理和服务(支持Megatron-LM’s tensor parallel algorithm)

可以使用SkyPilot 框架运行在云端

可以使用NVIDIA Triton 快速部署

可以使用LangChain 提供服务

量化(Quantization)方法

4-bit: AutoAWQ

OpenLLM

促进实际生产过程中的大模型的部署,微调,服务和监测.

项目链接

github: GitHub – bentoml/OpenLLM: Operating LLMs in production

支持的平台和硬件

GPU

支持的模型

| model |

| Baichuan |

| ChatGLM |

| DollyV2 |

| Falcon |

| FlanT5 |

| GPTNeoX |

| Llama |

| Mistral |

| MPT |

| OPT |

| Phi |

| Qwen |

| StableLM |

| StarCoder |

| Yi |

接口API支持 & Integrations

Serve LLMs over a RESTful API or gRPC with a single command. You can interact with the model using a Web UI, CLI, Python/JavaScript clients, or any HTTP client of your choice.

BentoML,OpenAI’s Compatible Endpoints,LlamaIndex,LangChain, andTransformers Agents.

量化(Quantization)方法

- LLM.int8(): 8-bit Matrix Multiplication through bitsandbytes

- SpQR: A Sparse-Quantized Representation for Near-Lossless LLM Weight Compression through bitsandbytes

- AWQ: Activation-aware Weight Quantization,

- GPTQ: Accurate Post-Training Quantization

- SqueezeLLM: Dense-and-Sparse Quantization.

支持多个Runtime, 主要为使用 vllm 和 pytorch backend.

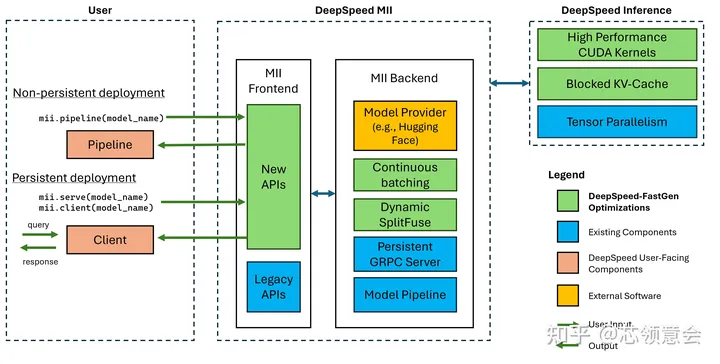

DeepSpeed-MII

MII architecture

针对DeepSpeed 模型实现的,专注于高吞吐量,低延迟和成本效益的开源推理框架

MII(Model Implementations for Inference) 提供加速的文本生成推理通过Blocked KV Caching, Continuous Batching, Dynamic SplitFuse 和高性能的CUDA Kernels, 细节请参考:https://github.com/microsoft/DeepSpeed/tree/master/blogs/deepspeed-fastgen

项目链接

https://github.com/microsoft/DeepSpeed-MII

支持的平台和硬件

NVIDIA GPUs

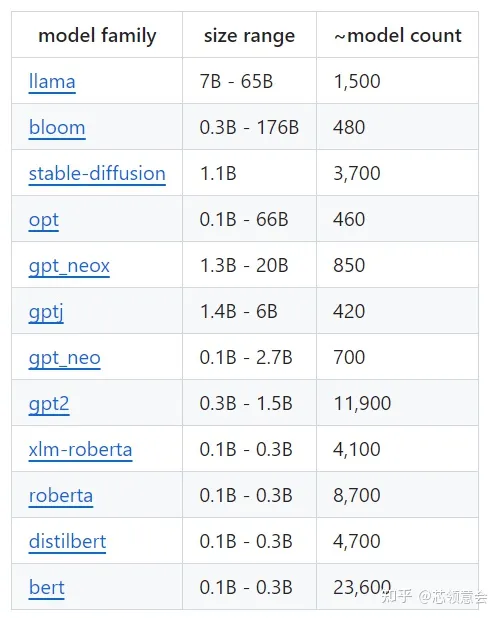

支持的模型

MII model support

接口API支持

RESTful API

TensorRT-llm

组装优化大语言模型推理解决方案的工具,提供Python API 来定义大模型,并为 NVIDIA GPU 编译高效的 TensorRT 引擎.

TensorRT-LLM is a toolkit to assemble optimized solutions to perform Large Language Model (LLM) inference. It offers a Python API to define models and compile efficientTensorRTengines for NVIDIA GPUs. It also contains Python and C++ components to build runtimes to execute those engines as well as backends for theTriton Inference Serverto easily create web-based services for LLMs. TensorRT-LLM supports multi-GPU and multi-node configurations (through MPI).

项目链接

docs: https://github.com/NVIDIA/TensorRT-LLM/tree/main/docs/source

github: https://github.com/NVIDIA/TensorRT-LLM

支持的平台和硬件

NVIDIA GPUs (H100, L40S, A100, A30, V100)

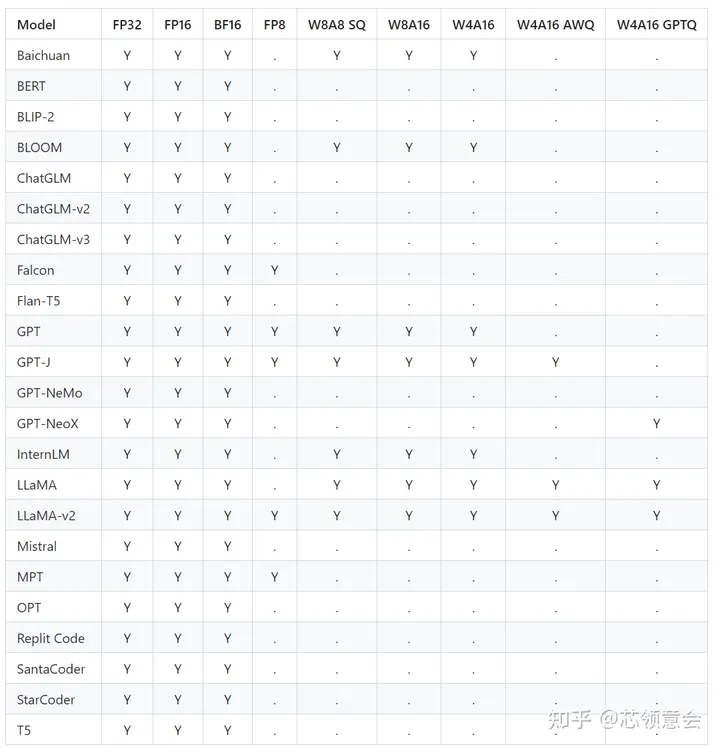

支持的模型

- Baichuan

- Bert

- Blip2

- BLOOM

- ChatGLM

- Falcon

- Flan-T5

- GPT

- GPT-J

- GPT-Nemo

- GPT-NeoX

- InternLM

- LLaMA

- LLaMA-v2

- Mistral

- MPT

- mT5

- OPT

- Qwen

- Replit Code

- SantaCoder

- StarCoder

- T5

- Whisper

接口API支持

Python API, Pytorch API, C++ API, NVIDIA Triton Inference Server,

量化(Quantization)方法

INT8 SmoothQuant (W8A8), NT4 and INT8 Weight-Only (W4A16 and W8A16), GPTQ and AWQ (W4A16), FP8 (Hopper)

ref: https://github.com/NVIDIA/TensorRT-LLM/blob/main/docs/source/precision.md

其他

TensorRT-LLM 主要特色:

暂无评论内容